Notes on Building Conversations Support for Chat-CLI

I've spent a bit of time over the past couple of days thinking more critically about how I'd like to evolve my Chat-CLI project. One of the capabilities I've been thinking about for some time now is the ability to save chats and reload them in the future. This seems to be pretty common across the major LLM applications out there, like claude.ai.

There are a number of ways to do this. I could create a local database using SQLite and store log data there. I could also create a remote/cloud-based database and an API for it. Or I could just write code to query and parse Amazon Bedrock's log data via the CloudWatch API.

Eventually I sort of landed on a mix of all of the above.

Here's my current thinking:

- I'd like people to be able to just download the CLI program and start chatting with LLMs on Amazon Bedrock. You can do this right now (as long as you have enabled Model Access and permissions correctly), and I don't want this to change.

- I figure, if you have already gone through the trouble to create an AWS account and set up Model Access and permissions, it's probably not a huge leap to open up access to other things.

- Amazon Bedrock has built-in support for logging model invocations to CloudWatch and/or Amazon Simple Storage Service (S3).

- I like event-driven architectures, so…

Here is my current plan:

- Build an easily deployable serverless, event-driven architecture that leverages Amazon Bedrock's invocation logs stored on S3. (I'll do this first.)

- Build it as a separate, optional architecture anyone can download and configure. (There's gonna be a few pre-reqs)

- Have a fallback of using SQLite for local storage (But, I should probably build this first.)

As you can see, there are several roadblocks between me and a working feature. I need to figure out how to deal with local storage for a command-line program in Go, learn how to deal with persistent local config in Go, and work out how to build the architecture on AWS as described above. The last bit is actually the easiest for me.

Things I've learned so far:

- There is a property called "requestMetadata" for all the Amazon Bedrock Invoke and Converse APIs. It lets you attach key-value pairs to the request. These get stored in the invocation logs, and I can use them to tie together log entries from the same chat session.

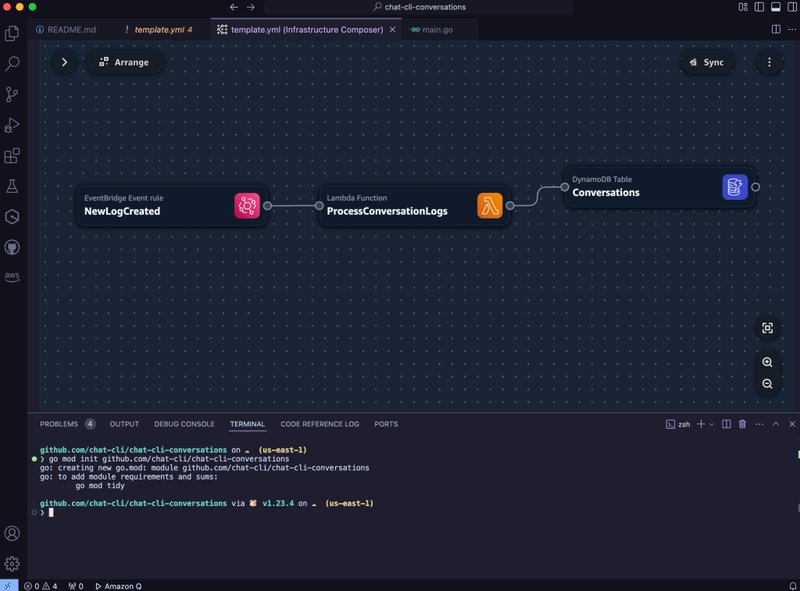

- I really love AWS Infrastructure Composer for quickly prototyping ideas right in VS Code.

- I really like using claude.ai alongside my code editor. I dunno why, but it seems like having an AI assistant in a separate application is a nice thing. I get the conversation history, and can pick up where I left off on my iPhone. I've had several instances where an idea popped into my head, and I used claude.ai on my phone to get something started, which I expanded on later at my desk.

- There is a nice feature of the claude.ai desktop app for macOS where you can hit a hotkey to get a small input window quickly.

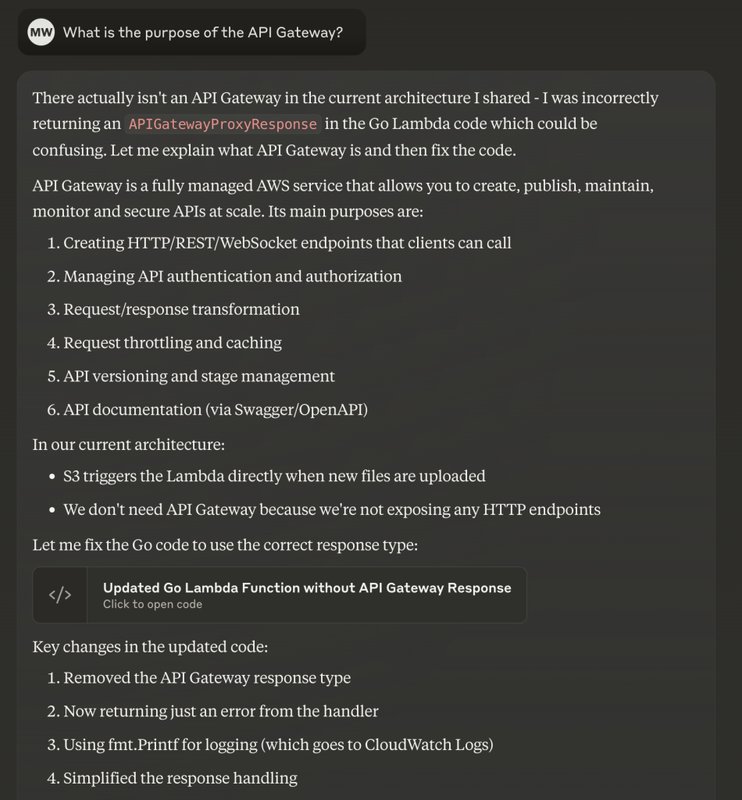

- Claude is getting better at admitting mistakes and having an actual discussion with me vs. just taking orders and making things.

More soon!